How to run a data governance readiness assessment: 20 survey questions

Poor data governance often shows up as conflicting reports, unclear ownership, delayed projects, audit findings, and decisions made on data that no one fully trusts. Gartner research puts the average annual cost of poor data quality at $12.9 million – a useful reminder that governance problems have an impact on your operations and, crucially, your finances.

Before you can improve governance, you need to know where your organization stands today. A data governance readiness assessment gives you that baseline. It offers a structured approach to reviewing current data management practices, identifying gaps, and understanding how ready your teams are for a more robust data governance framework.

In practice, that means looking at the key components of governance across ownership, data quality, policy, tooling, and culture. It also means turning those findings into something useful: a roadmap that supports regulatory compliance, operational efficiency, and more reliable decision making.

In this guide, you'll learn what a data governance readiness assessment involves, which dimensions it should examine, which data governance readiness survey questions to ask, and what to do once the results are in.

What is a data governance readiness assessment?

A data governance readiness assessment is a structured evaluation of how well an organization manages, protects, and gets value from its data.

At a practical level, it maps current data management practices against governance dimensions such as data ownership, data quality, policy, metadata management, access control, and training. The goal is to identify areas where your governance maturity is strong, where there are data quality gaps, and where accountability structures are missing. Instead of relying on assumptions, the assessment gives data leaders a baseline view of current performance across business units and data domains.

Governance is broader than compliance alone. A strong assessment looks at whether:

- Data assets have named owners

- Data definitions are consistent

- Sensitive data is classified correctly

- The data warehouse and surrounding infrastructure support access control and lineage

- Teams know how to apply governance policies in day-to-day work.

It's also not a one-time audit. A readiness assessment is most useful when treated as the initial phase of a broader governance program.

Many organizations structure their governance readiness assessment around established industry standards such as the DAMA Data Management Body of Knowledge, or DAMA-DMBOK, which DAMA describes as a globally recognized framework for data management principles, best practices, and essential functions.

Why data governance maturity matters

A readiness baseline matters because data governance implementation is expensive to get wrong. Without an assessment, teams often jump straight to tooling, write policies no one follows, or launch a data governance program without understanding where the real friction sits.

That creates two problems:

- You can spend heavily on catalogues, workflows, or monitoring without fixing foundational issues, such as unclear data ownership or inconsistent data definitions

- You can create a governance model that looks strong on paper but fails when applied to real data processes.

Experian found that 95% of organizations see negative impacts from poor data quality, affecting areas such as customer experience, business efficiency, and reputation.

Maturity also matters because regulatory compliance is becoming harder to treat as an afterthought. The GDPR sets requirements for how organizations collect, store, manage, and retain personal data, while the CCPA and its later amendments give California residents rights around deletion, correction, and the use of sensitive personal information.

Weak controls around data classification, retention, access, or accuracy can quickly become compliance risks. A documented readiness assessment will not make an organization compliant by itself, but it can help demonstrate that governance is being managed deliberately and reviewed systematically.

There's also a direct business case. Reliable data supports informed decisions, better operational metrics, stronger reporting, and greater efficiency across teams. Unreliable data does the opposite: it slows down approvals, creates duplicate work, undermines trust in dashboards, and makes it harder to align data strategy with business goals.

That same issue now extends into AI governance. Poor data quality can hold back AI adoption, because LLMs and generative AI tools learn from low-quality data and operate under inadequate risk controls.

Governance maturity is no longer only about keeping records tidy; it's part of how organizations protect business outcomes.

What a data governance survey should cover

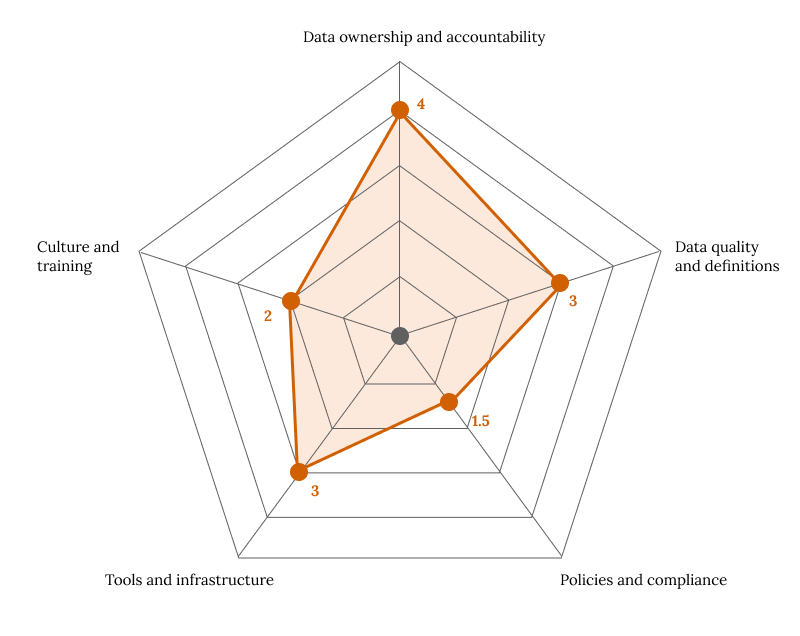

A good data governance survey doesn't try to measure everything at once. It focuses on the areas that most directly shape governance readiness. In most organizations, five dimensions give you the clearest picture:

- Data ownership and accountability

- Data quality and definitions

- Policies and compliance

- Tools and infrastructure

- Culture and training

Here they are in greater detail.

Data ownership and accountability

Governance starts with knowing who is responsible for what.

A survey should test whether critical data assets have assigned data owners and stewards, whether those roles are understood, and whether people know who makes decisions when issues arise. Without clear data ownership, even a well-designed data governance framework struggles to move from policy to action.

Data quality and definitions

Ownership only works if teams are aligned on what the data means.

This part of a data governance assessment should examine whether data is accurate, complete, timely, and used consistently across the organization.

Conflicting definitions of core metrics such as revenue, active users, or conversion rates are one of the most common governance failures because they make cross-functional reporting unreliable.

Policies and compliance

Once ownership and definitions are understood, the next question is whether rules exist and are enforced.

A data governance survey should cover policies for access, retention, classification, sharing, privacy, and incident response. The point isn't just whether a document exists, but whether teams can apply it in practice and whether regulatory requirements are reflected in everyday workflows.

Tools and infrastructure

Policy needs technical support. This part of the survey should test whether the organization has the infrastructure to support governance, including metadata management, catalogues, lineage visibility, role-based access controls, audit logs, and monitoring.

A mature governance program does not depend on tooling alone, but the absence of tooling often makes governance fragile and manual.

Culture and training

The final dimension is often the one that determines whether governance sticks.

Staff need to understand their responsibilities, know how to escalate issues, and see governance as part of good data management rather than a compliance checkbox.

Programs often fail because adoption lags behind policy, which is why training and shared habits matter as much as formal rules.

Together, these five areas give you a balanced view of governance readiness. The next step is to translate them into scoreable data governance readiness survey questions.

20 data governance readiness survey questions

Those five dimensions become useful when they produce honest, comparable responses. For most teams, the best format is a Likert-style rating scale from 1 to 5:

- 1 – Not in place

- 2 – Rarely in place

- 3 – Partially in place

- 4 – Mostly in place

- 5 – Fully embedded

Use the same rating scale for every question so you can compare results across teams, data domains, or time periods.
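If responses are processed in code, it can help to pin the scale down in one place so every question is validated the same way. The following is a minimal sketch, not a prescribed implementation: the `Rating` member names and the `parse_rating` helper are illustrative assumptions, and only the five labels come from the scale above.

```python
from enum import IntEnum


class Rating(IntEnum):
    """The 1-5 scale listed above; member names are illustrative."""
    NOT_IN_PLACE = 1
    RARELY_IN_PLACE = 2
    PARTIALLY_IN_PLACE = 3
    MOSTLY_IN_PLACE = 4
    FULLY_EMBEDDED = 5


def parse_rating(raw):
    """Convert a raw survey answer to a Rating, rejecting anything off the scale."""
    text = str(raw).strip()
    if text not in {"1", "2", "3", "4", "5"}:
        raise ValueError(f"rating must be a whole number from 1 to 5, got {raw!r}")
    return Rating(int(text))
```

Rejecting values such as `0`, `6`, or `3.5` at the point of entry keeps later averages comparable across teams and survey rounds.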

Most importantly, distribute the data governance survey to relevant stakeholders across operations, compliance, IT, analytics, and business teams. Governance gaps often appear in the difference between what leadership believes exists and what practitioners experience day to day.
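One way to surface that difference is to compare the average rating each group gives to the same question. The sketch below assumes each response is tagged with a respondent group; the group labels, question ids, and ratings are hypothetical sample data.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical responses: (respondent group, question id, rating 1-5).
responses = [
    ("leadership", "ownership_q1", 4),
    ("leadership", "ownership_q1", 5),
    ("practitioner", "ownership_q1", 2),
    ("practitioner", "ownership_q1", 3),
    ("leadership", "quality_q1", 3),
    ("practitioner", "quality_q1", 3),
]


def perception_gaps(rows, group_a="leadership", group_b="practitioner"):
    """Return {question: mean(group_a) - mean(group_b)} where both groups answered."""
    by_question = defaultdict(lambda: defaultdict(list))
    for group, question, rating in rows:
        by_question[question][group].append(rating)

    gaps = {}
    for question, groups in by_question.items():
        # Skip questions that only one of the two groups answered.
        if groups.get(group_a) and groups.get(group_b):
            gaps[question] = mean(groups[group_a]) - mean(groups[group_b])
    return gaps


gaps = perception_gaps(responses)
```

A large positive gap on a question suggests leadership rates that practice more highly than the people doing the work, which makes it a good candidate for follow-up conversations.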

Data ownership and accountability

- Does each critical data asset have a named owner responsible for its accuracy, availability, and appropriate use?

- Are data stewardship responsibilities documented for key systems, reports, and operational metrics?

- Do business units understand who approves changes to data definitions, access rules, and governance policies?

- Is there a clear escalation path when ownership disputes or governance issues arise within a data domain?

Data quality and definitions

- Do teams across the organization use consistent data definitions for shared metrics such as revenue, active users, or case volume?

- Is there a documented process for identifying, logging, and resolving data quality issues?

- Are data quality rules or validation checks applied to critical datasets before they are used for reporting or decision making?

- Can teams trace the source of quality problems across systems such as source applications, integration layers, or the data warehouse?

Policies and compliance

- Is there a documented data classification policy that distinguishes between public, internal, confidential, and sensitive data?

- Are data retention and deletion schedules defined and enforced in line with regulatory requirements and internal policy?

- Do staff know which governance policies apply to data access, sharing, privacy, and third-party use?

- Are governance controls reviewed regularly to address new compliance risks, audit findings, or changes in business strategy?

Tools and infrastructure

- Is there a data catalogue, metadata management process, or equivalent system that gives visibility into what data exists and where it lives?

- Can users see lineage for critical data assets, including where the data originated and how it was transformed?

- Are role-based access controls in place so employees can only access data relevant to their responsibilities?

- Is governance monitoring in place for activities such as access changes, policy exceptions, or unusual data movement?

Culture and training

- Have staff with data responsibilities received governance or data security training within the last 12 months?

- Do teams treat governance as part of normal data management practices rather than as a separate compliance task?

- Are governance expectations included in onboarding, handovers, or operating procedures for roles that handle important data?

- When a data quality, privacy, or access issue is identified, do employees know how to report it and what happens next?

You can also add a short free-text field after each section, asking respondents to name the biggest blocker in that area. Quantitative question scores help you measure progress. Short written responses help you develop targeted strategies and identify strengths that numbers alone can miss.

How to score and interpret your results

After collecting responses, calculate an average score for each question, then for each dimension, then for the assessment as a whole. This gives you both a high-level readiness score and a more useful view of where specific gaps exist.
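The averaging described here can be sketched in a few lines. This is one possible approach under stated assumptions: the dimension names, question ids, and ratings are hypothetical sample data, and the `score` function is an illustrative helper rather than part of any survey tool.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical responses: (dimension, question id, rating 1-5).
responses = [
    ("ownership", "q1", 2), ("ownership", "q1", 4),
    ("ownership", "q2", 3), ("ownership", "q2", 3),
    ("tooling", "q13", 4), ("tooling", "q13", 5),
]


def score(rows):
    """Return (question averages, dimension averages, overall average)."""
    per_question = defaultdict(list)
    for dimension, question, rating in rows:
        per_question[(dimension, question)].append(rating)
    question_avg = {key: mean(vals) for key, vals in per_question.items()}

    # Each dimension score is the mean of its question averages.
    per_dimension = defaultdict(list)
    for (dimension, _question), avg in question_avg.items():
        per_dimension[dimension].append(avg)
    dimension_avg = {dim: mean(vals) for dim, vals in per_dimension.items()}

    # The overall score weights every dimension equally.
    overall = mean(list(dimension_avg.values()))
    return question_avg, dimension_avg, overall


question_avg, dimension_avg, overall = score(responses)
```

Averaging question averages, rather than pooling every rating, keeps a question with many respondents from dominating its dimension. When every respondent answers every question, both approaches give the same result.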

A simple four-stage maturity model works well for grouping the resulting scores.

The pattern across dimensions matters as much as the total score.

Low results in data ownership and policy usually indicate foundational weaknesses. In those cases, buying more technology may not solve the problem because the organization has not yet settled on accountability or core rules.

Low scores in culture and training often mean governance policies exist but are not applied consistently in daily work. Low tooling scores may point to manual processes that make governance hard to sustain at scale.

Presenting the output visually helps. A radar chart is often the clearest way to compare the five dimensions because it shows relative strengths and weak points at a glance. For leadership teams, that kind of simple visual can make it easier to connect governance maturity with business objectives, mitigation plans, and budget decisions.

With that picture in place, you can decide what to do with the results.

What to do after your assessment

Scoring your assessment gives you a snapshot. The next step is turning that snapshot into action without trying to fix everything at once.

Start with the lowest-scoring dimension.

- If ownership is weak, focus on assigning data owners and documenting accountability structures.

- If policy is the problem, tighten your data governance policies around classification, retention, and access.

- If culture is lagging, prioritize training and communication.

A focused improvement sprint usually creates more momentum than a broad governance overhaul.
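Picking the sprint focus from dimension scores can be sketched as follows. The scores are hypothetical, and `pick_sprint_focus` is an illustrative helper. The suggested actions are taken from the three cases listed above, so dimensions without a listed action return `None` rather than an invented recommendation.

```python
# Hypothetical dimension averages on the 1-5 scale.
dimension_scores = {
    "ownership": 2.1,
    "quality": 3.4,
    "policy": 2.8,
    "tooling": 3.9,
    "culture": 3.0,
}

# First actions for the three cases covered in this guide.
FIRST_FOCUS = {
    "ownership": "Assign data owners and document accountability structures",
    "policy": "Tighten policies around classification, retention, and access",
    "culture": "Prioritize training and communication",
}


def pick_sprint_focus(scores):
    """Return the lowest-scoring dimension and its suggested first action, if any."""
    lowest = min(scores, key=scores.get)  # on a tie, the first listed dimension wins
    return lowest, FIRST_FOCUS.get(lowest)


dimension, action = pick_sprint_focus(dimension_scores)
```

If two dimensions score almost the same, the numbers alone may not settle the choice, and the free-text responses can help decide which one to tackle first.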

From there, build a governance roadmap with named owners, timelines, and measurable milestones. Each action should connect clearly to business goals, strategic objectives, or compliance needs.

With a roadmap in place, the readiness assessment becomes part of a broader data governance implementation plan rather than a one-off review.

It also helps to share results with senior leadership in business terms. Connect the findings to the cost of poor data quality, the risk of unreliable reporting, or the operational drag created by weak data management processes. That framing makes it easier to secure buy-in, funding, and support from key stakeholders.

Finally, schedule the next assessment before the current one fades into the background. Running the same data governance survey again in six to twelve months lets you measure progress, compare teams, and see whether targeted strategies are working.

Run once, the survey is a snapshot. Run regularly, and it becomes a governance instrument in its own right.
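Measuring progress between two rounds comes down to comparing dimension averages. A minimal sketch, assuming both rounds used the same questions and scale; the scores and the `progress` helper are hypothetical.

```python
def progress(previous, current):
    """Return {dimension: change in average score} for dimensions present in both rounds."""
    return {
        dim: round(current[dim] - previous[dim], 2)
        for dim in previous
        if dim in current
    }


# Hypothetical dimension averages from two survey rounds.
round_1 = {"ownership": 2.1, "policy": 2.8, "culture": 3.0}
round_2 = {"ownership": 3.0, "policy": 2.9, "culture": 2.7}

changes = progress(round_1, round_2)
regressed = [dim for dim, delta in changes.items() if delta < 0]
```

Dimensions that appear in `regressed` deserve a closer look before the next sprint is planned, since a drop may reflect changes in staffing, scope, or respondent mix rather than a real decline.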

Final thoughts

A data governance readiness assessment is not an optional precursor to a governance program; it's the starting point that makes the rest of the work credible.

When you know where the gaps are across data ownership, data quality, policy, tooling, and culture, you can move from aspiration to action. You can address data quality gaps, develop targeted strategies, and build a governance program that supports reliable data, stronger compliance, and better business outcomes.

Checkbox makes that process easier. You can use it to build and distribute a formal data governance survey, collect responses at scale, and analyze the results in a structured, secure workflow. Teams will be able to move from assessment to action faster, with clearer evidence and less admin overhead.
